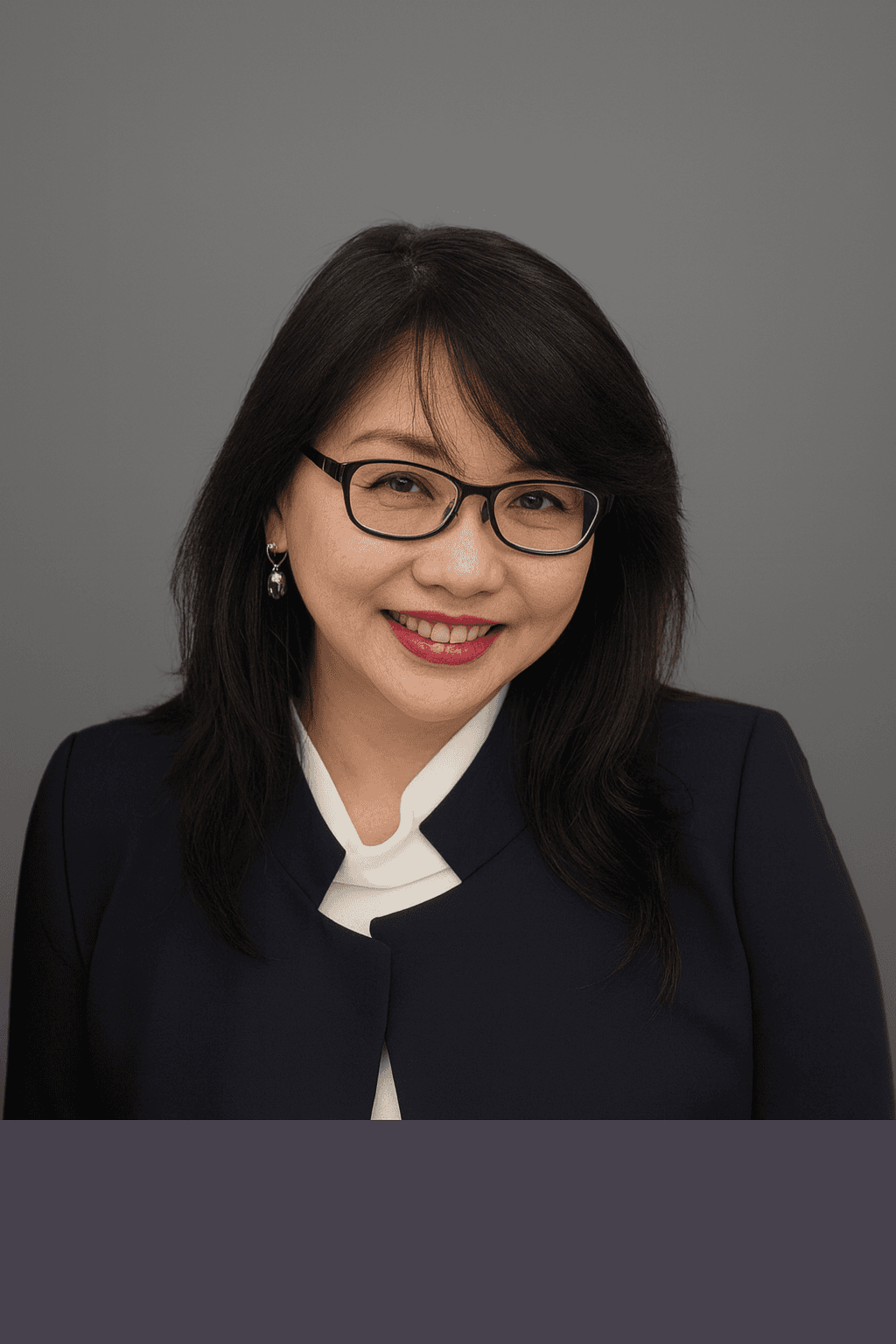

Speaker

Rakesh More

Bio

Program Lead – AI and Finance Portfolio & Application Management

A.J. Gallagher | Dec 2021 – Present

- Lead enterprise AI portfolio strategy integrating machine learning and advanced analytics across insurance business functions.

- Direct large-scale AI and cloud transformation programs supporting risk assessment, fraud detection, and financial analytics platforms.

- Implement responsible AI governance frameworks aligned with regulatory standards, including NAIC and data privacy requirements.

- Manage cross-functional teams delivering enterprise AI platforms across multiple business units.

PROFESSIONAL SUMMARY

AI and machine learning leader with 20+ years of experience delivering enterprise-scale intelligent systems in the insurance and financial services sectors. Specializing in responsible AI, generative AI governance, and large-scale AI transformation programs across underwriting, claims, fraud detection, and risk analytics. Active researcher in generative AI safety with work focusing on integration of Retrieval-Augmented Generation (RAG) architectures with guardrails frameworks to improve trustworthiness and reliability of large language models in enterprise environments.

KEY CONTRIBUTIONS

- Led enterprise AI strategy initiatives integrating machine learning and generative AI across insurance operations, including underwriting, claims, and fraud detection.

- Conducted research on the integration of Retrieval-Augmented Generation (RAG) with guardrails frameworks to mitigate hallucinations in enterprise LLM systems.

- Developed evaluation approaches for LLM reliability, hallucination reduction, and responsible AI deployment in regulated industries.

- Led cross-functional AI initiatives involving data science, engineering, legal, and compliance teams to deploy responsible AI frameworks aligned with regulatory requirements.
Speaker Title

Vision Guardrails for Enterprise AI: Detecting Brand and Identity Misuse Through Embedding Similarity Checks

Abstract

As enterprises adopt generative AI models, the risk grows considerably that generated images will resemble protected assets owned by the organization, such as logos, designs, confidential documents, images of employees, or screenshots of internal user interfaces. Although existing text-based guardrail architectures such as NVIDIA NeMo Guardrails can control text generation at the prompt level, there is currently no defined method for checking whether generated image content resembles sensitive enterprise data. In this paper, we present Vision Guardrails, a security architecture in which internal enterprise image data is embedded in a protected vector space and any image whose embedding is similar to those vectors is detected.
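The similarity check described in the abstract could look roughly like the sketch below. It is not the speaker's implementation: the abstract does not name an embedding model, a similarity metric, or a threshold, so the random vectors standing in for image embeddings, the use of cosine similarity, the `check_image` helper, and the 0.85 threshold are all assumptions made for illustration.

```python
import numpy as np


def normalize(vectors: np.ndarray) -> np.ndarray:
    """Scale vectors to unit length so dot products equal cosine similarity."""
    norms = np.linalg.norm(vectors, axis=-1, keepdims=True)
    return vectors / np.clip(norms, 1e-12, None)


def check_image(candidate: np.ndarray, protected: np.ndarray,
                threshold: float = 0.85) -> tuple[bool, int, float]:
    """Compare one candidate embedding against all protected embeddings.

    Returns (flagged, index of closest protected asset, cosine similarity).
    The threshold is a hypothetical value, not one taken from the talk.
    """
    scores = normalize(protected) @ normalize(candidate)
    best = int(np.argmax(scores))
    return bool(scores[best] >= threshold), best, float(scores[best])


# Stand-in data: in a real system these vectors would come from an image
# embedding model applied to protected assets and to generated images.
rng = np.random.default_rng(0)
protected = rng.normal(size=(5, 64))                    # 5 protected assets
near_copy = protected[2] + 0.05 * rng.normal(size=64)   # slight variation of asset 2
unrelated = rng.normal(size=64)                         # unrelated image

for name, vec in [("near_copy", near_copy), ("unrelated", unrelated)]:
    flagged, idx, score = check_image(vec, protected)
    print(f"{name}: flagged={flagged}, closest_asset={idx}, score={score:.3f}")
```

In practice the threshold would need tuning against labeled examples, since a value that is too low blocks harmless images and one that is too high misses close imitations.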

Applicant email: rakeshmore@gmail.com

Session emphasis

What Rakesh is known for

- Robustness evaluation and threat-aware design

- Security implications across the ML lifecycle

- Actionable guidance for teams building production systems